Integrate

Description

Action Integrate instructs HVR to integrate changes into a database table or file location. Various parameters are available to tune the integration functionality and performance.

If integration is done on a file location in a channel with table information, then changes are integrated as records in XML, CSV, Avro, or Parquet format. For details, see action FileFormat.

Alternatively, a channel can contain only file locations and no table information. In this case, each file captured is treated as a 'blob' and is replicated to the integrate file locations without HVR recognizing its format. If such a 'blob' file channel is defined with only actions Capture and Integrate (no parameters) then all files in the capture location's directory (including files in subdirectories) are replicated to the integrate location's directory. The original files are not touched or deleted, and in the target directory, the original file names and subdirectories are preserved. New and changed files are replicated, but empty subdirectories and file deletions are not replicated.
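
The default 'blob' behavior described above can be pictured with a small sketch. This is not HVR's implementation; the function name `replicate_blobs` and the test used to decide whether a file has changed (size and modification time) are assumptions made purely for illustration.

```python
import shutil
from pathlib import Path


def replicate_blobs(capture_dir: Path, integrate_dir: Path) -> list[str]:
    """Copy new or changed files from capture_dir to integrate_dir.

    Returns the relative paths that were copied. Source files are never
    modified or deleted, and nothing is ever deleted on the target.
    """
    copied = []
    for src in sorted(capture_dir.rglob("*")):
        if not src.is_file():
            continue  # directories are only created when a file needs them
        rel = src.relative_to(capture_dir)
        dst = integrate_dir / rel
        if dst.exists():
            s, d = src.stat(), dst.stat()
            # Assumed change test: same size and target not older than source.
            if s.st_size == d.st_size and d.st_mtime >= s.st_mtime:
                continue
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copy2 also preserves the modification time
        copied.append(rel.as_posix())
    return copied
```

Because only files are visited, empty subdirectories never appear on the target, and a file removed from the capture directory is simply no longer visited, so its copy on the target stays in place.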

If a channel is integrating changes into Salesforce, then the Salesforce 'API names' for tables and columns (case-sensitive) must match the 'base names' in the HVR channel. This can be done by defining a TableProperties /BaseName action on each table and a ColumnProperties /BaseName action on each column.

Parameters

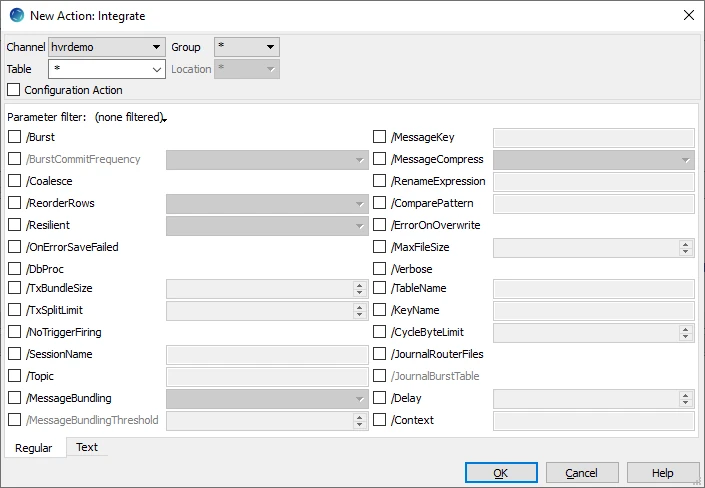

This section describes the parameters available for action Integrate. By default, only the supported parameters available for the selected location class are displayed in the Integrate window.

| Parameter | Argument | Description |

|---|---|---|

| /Burst | | Integrate changes into a target table using the Burst algorithm. All changes for the cycle are first sorted and coalesced, so that only a single change remains for each row in the target table (see parameter /Coalesce). These changes are then bulk loaded into 'burst tables' named tbl__b. Finally, a single set-wise SQL statement is executed for each operation type (insert, update and delete). The end result is the same as normal integration (called continuous integration), but the order in which the changes are applied is completely different from the order in which they occurred on the capture machine. For example, all changes are done for one table before the next table. This is normally not visible to other users because the burst is done as a single transaction, unless parameter /BurstCommitFrequency is used. If database triggers are defined on the target tables, then they will be fired in the wrong order. This parameter cannot be used if the channel contains tables with foreign key constraints. If this parameter is defined for any table, then it affects all tables integrated to that location. During /Burst integrate, for some databases HVR 'streams' data into target databases straight over the network into a bulk loading interface specific to each DBMS (e.g. direct-path-load in Oracle) without storing the data on disk. For other DBMSs, HVR puts data into a temporary directory ('staging file') before loading it into the target database. For more information about staging, see section "Burst Integrate and Bulk Refresh" in the respective location class requirements page. /Burst is required for online analytical processing (OLAP) databases, such as Greenplum, Teradata, Snowflake, and Redshift, for better performance during integration. This parameter cannot be used for file locations. A similar effect (reducing changes down to one per row) can be defined with parameter /ReorderRows=SORT_COALESCE. This parameter cannot be used with DbSequence. /Burst cannot be combined with the CollisionDetect action. |

| /BurstCommitFrequency | freq | Frequency for committing burst set-wise SQL statements. Available options for freq are:

|

| /Coalesce | | Causes coalescing of multiple operations on the same row into a single operation. For example, an insert and an update can be replaced by a single insert; five updates can be replaced by one update, or an insert and a delete of a row can be filtered out altogether. The disadvantage of not replicating these intermediate values is that some consistency constraints may be violated on the target database. Parameter /Burst performs a sequence of operations including coalescing. Therefore this parameter should not be used with /Burst. |

| /ReorderRows | mode | Controls the order in which changes are written to files. If the target file name depends on the table name (for example, parameter /RenameExpression contains substitution {hvr_tbl_name}) and the change stream alternates between changes for different tables, then keeping the original order will cause HVR to create lots of small files (a few rows in a file for tab1, then a row for tab2, then another file for tab1 again). This is because HVR does not reopen files after it has closed them. Reordering rows during integration will avoid these 'micro-files'.

|

| /Resilient | mode | Resilient integration of inserts, updates, and deletes. This modifies the behavior of integration if a change cannot be integrated normally. If a row already exists then an insert is converted to an update, an update of a non-existent row is converted to an insert and a delete of a non-existent row is discarded. Existence is checked using the replication key known to HVR (rather than checking the actual indexes or constraints on the target table). Resilience is a simple way to improve replication robustness, but the disadvantage is that consistency problems can go undetected. Value mode controls whether an error message is written when this occurs. Additionally, HVR treats an update operation as two parts: before-update, which contains the values before the update happened, and after-update, which contains the new values. If the after-update has missing values, the update cannot be converted to an insert. If the row identified by the before-update does not exist on the target either, the update cannot be applied, so HVR skips the operation. This is especially applicable to HANA locations. Available options for mode are:

|

| /OnErrorSaveFailed | | On integrate error, write the failed change into 'fail table' tbl__f and then continue integrating other changes. Changes written into the fail table can be retried afterwards (see command Hvrretryfailed). If this parameter is not defined, the default behavior if an integrate error occurs is to write a fatal error and to stop the job. When integrating into a file location, certain errors no longer cause the integrate to fail. Instead, the current file's data will be 'saved' in the file location's state directory, and the integrate job will write a warning and continue processing other replicated files. The file integration can be reattempted (see command Hvrretryfailed). Note that this behavior affects only certain errors, for example, if a target file cannot be changed because someone has it open for writing. Other error types (e.g. disk full or network errors) are still fatal and cause the integrate job to fail. If data is being replicated from database locations and this parameter is defined for any table, then it affects all tables integrated to that location. This parameter cannot be used with parameter /Burst. For Salesforce, this parameter causes failed rows/files to be written to a fail directory ($HVR_CONFIG/work/hub/chn/loc/sf) and an error message to be written which describes how the rows can be retried. |

| /DbProc | | Apply database changes by calling integrate database procedures instead of using direct SQL statements (insert, update and delete). The database procedures are created by hvrinit and called tbl__ii, tbl__iu, tbl__id. This parameter cannot be used on tables with long column data types, for example, long varchar or blob. This parameter is supported only for certain location types. For the list of supported location types, see Integrate with /DbProc in Capabilities. |

| /TxBundleSize | int | Bundle small transactions together for improved performance. The default transaction bundle size is 100. For example, if the bundle size is 10 and there were 5 transactions with 3 changes each, then the first 3 transactions would be grouped into a transaction with 9 changes and the others would be grouped into a transaction with 6 changes. Transaction bundling does not split transactions. If this parameter is defined for any table, it affects all tables integrated to that location. /TxBundleSize can only be used in combination with the continuous integration mode (without /Burst) for performance tuning. This parameter is disabled when /Burst is selected. |

| /TxSplitLimit | int | Split very large transactions to limit resource usage. The default is 0, which means transactions are never split. For example, if a transaction on the master database affected 10 million rows and the remote database has a small rollback segment, then with a split limit of 1000000 the original transaction would be split into 10 transactions of 1 million changes each. If this parameter is defined for any table, it affects all tables integrated to that location. /TxSplitLimit can only be used in combination with the continuous integration mode (without /Burst). This parameter is disabled when /Burst is selected. |

| /NoTriggerFiring | | Enable or disable database triggers during integrate. This parameter is supported only for certain location classes. For the list of supported location classes, see Disable/enable database triggers during integrate (/NoTriggerFiring) in Capabilities. For Ingres, this parameter disables the firing of all database rules during integration. This is done by performing SQL statement set norules at connection startup. For SQL Server, this parameter disables the firing of database triggers, foreign key constraints, and check constraints during integration if those objects were defined with not for replication. This is done by connecting to the database with the SQL Server Replication connection capability. A disadvantage of this connection type is that the database connection string must have form host,port instead of form \\host\instance. This port needs to be configured in the Network Configuration section of the SQL Server Configuration Manager. Another limitation is that encryption of the ODBC connection is not supported if this parameter is used for SQL Server. For Oracle and SQL Server, Hvrrefresh will automatically disable triggers on target tables before the refresh and re-enable them afterwards, unless option -q is defined. Other ways to control trigger firing are described in Managing Recapturing Using Session Names. |

| /SessionName | sess_name | Integrate changes with specific session name. The default session name is hvr_integrate. Capture triggers/rules check the session name to avoid recapturing changes during bidirectional replication. For a description of recapturing and session names see Managing Recapturing Using Session Names and Capture. If this parameter is defined for any table, then it affects all tables integrated to that location. |

| /Topic (Kafka) | expression | Name of the Kafka topic. The topic name can be a literal string or an expression. The following expressions substitute the capture location, table, or schema name into the topic name:

|

| /MessageBundling (Kafka) | mode | Number of messages written (bundled) into a single Kafka message. Regardless of the file format chosen, each Kafka message contains one row by default. Available options for mode are:

Note that Confluent's Kafka Connect only allows certain message formats and does not allow any message bundling; therefore /MessageBundling must either be undefined or set to ROW. Bundled messages simply consist of the contents of several single-row messages concatenated together. When the mode is TRANSACTION or THRESHOLD and the name of the Kafka topic contains an expression such as {hvr_tbl_name}, the rows of different tables will not be bundled into the same message. |

| /MessageBundlingThreshold (Kafka) | int | The threshold for bundling rows in a Kafka message. The default value is 800,000 bytes. This parameter is applicable only for the message bundling modes TRANSACTION and THRESHOLD. For those bundling modes, rows continue to be bundled into the same message until this threshold is exceeded. After that happens, the message is sent and new rows are bundled into the next message. By default, the maximum size of a Kafka message is 1,000,000 bytes; HVR will not send a message exceeding this size and will instead give a fatal error. You can change the maximum Kafka message size that HVR will send by defining $HVR_KAFKA_MSG_MAX_BYTES, but make sure it does not exceed the maximum message size configured in the Kafka broker (setting message.max.bytes). If the message size exceeds this limit, then the message will be lost. |

| /MessageKey (Kafka) | expression | Expression to generate a user-defined key in a Kafka message. An expression can contain constant strings mixed with substitutions. When /MessageKey is not defined and FileFormat/Json or FileFormat/Avro (with option Schema Registry (Avro) in the Kafka location connection not selected) is defined, the default value for /MessageKey is {"schema":string, "payload": {hvr_key_hash}}. Possible substitutions include:

It is recommended to define /Context when using the substitutions {hvr_var_xxx}, {hvr_slice_num}, {hvr_slice_total}, or {hvr_slice_value}, so that it can be easily disabled or enabled. {hvr_slice_num}, {hvr_slice_total}, and {hvr_slice_value} cannot be used if one of the old slicing substitutions {hvr_var_slice_condition}, {hvr_var_slice_num}, {hvr_var_slice_total}, or {hvr_var_slice_value} is defined in the channel/table involved in the compare/refresh. |

| /MessageKeySerializer (Kafka) | format | HVR will encode the generated Kafka message key in string, Kafka Avro, or JSON serialization format. Available options for format are:

|

| /MessageCompress (Kafka) | algorithm | HVR will configure the Kafka transport protocol to compress message sets transmitted to the Kafka broker using one of the available algorithms. Compression can reduce network latency and save disk space on the Kafka broker. Each message set can contain more than one Kafka message, and the size of the message set will be less than $HVR_KAFKA_MSG_MAX_BYTES. For more information, see Kafka Message Bundling and Size. Available options for algorithm are:

LZ4 compression is not supported on the Windows platform if Kafka broker version is less than 1.0. |

| /RenameExpression | expression | Expression to name new files. A rename expression can contain constant strings mixed with substitutions. When /RenameExpression is not defined -

Possible substitutions include:

It is recommended to define /Context when using the substitutions {hvr_var_xxx}, {hvr_slice_num}, {hvr_slice_total}, or {hvr_slice_value}, so that it can be easily disabled or enabled. {hvr_slice_num}, {hvr_slice_total}, and {hvr_slice_value} cannot be used if one of the old slicing substitutions {hvr_var_slice_condition}, {hvr_var_slice_num}, {hvr_var_slice_total}, or {hvr_var_slice_value} is defined in the channel/table involved in the compare/refresh. |

| /ComparePattern | patt | Perform direct file compare. During compare, HVR reads and parses (deserializes) files directly from a file location instead of using Hive external tables (even if these are configured for that location). While performing direct file compare, the files of each table are distributed to pre-readers. The file 'pre-read' subtasks generate intermediate files. The location for these intermediate files can be configured using LocationProperties/IntermediateDirectory. To configure the number of pre-read subtasks during compare, use hvrcompare with option -w. HVR can parse only CSV or XML file formats and does not support Avro, Parquet or JSON. This parameter can only be used on file locations. To perform direct file compare, option Generate Compare Event (-e) should be selected in command hvrcompare. Example: {hvr_tbl_name}/**/*.csv |

| /ErrorOnOverwrite | | Error if a new file has the same name as an existing file. If data is being replicated from database locations and this parameter is defined for any table, then it affects all tables integrated to that location. |

| /MaxFileSize | int | The threshold (in bytes) for bundling rows in a file. Rows are bundled into the same file until this threshold is exceeded. After that happens, the file is finalized/closed and HVR will start writing rows to a new file. The files written by HVR always contain at least one row, which means that specifying a very low size such as 1 byte will cause each file to contain a single row. For efficiency reasons, HVR's decision to start writing a new file depends on the length of the previous row, not the current row. This means that sometimes the actual file size may slightly exceed the value specified. If data is being replicated from database locations and this parameter is defined for any table, then it affects all tables integrated to that location. This parameter cannot be used for 'blob' file channels, which contain no table information and only replicate files as 'blobs'. |

| /Verbose | | The file integrate job will write extra information, including the name of each file which is replicated. Normally, the job only reports the number of files written. If data is being replicated from database locations and this parameter is defined for any table, then it affects all tables integrated to that location. |

| /TableName | userarg | API name of Salesforce table into which attachments should be uploaded. See section Salesforce Attachment Integration below. |

| /KeyName | userarg | API name of key in Salesforce table for uploading attachments. See section Salesforce Attachment Integration below. |

| /CycleByteLimit | int | Maximum amount (in bytes) of compressed router files to process per integrate cycle. The default value is 10000000 bytes (10 MB). Since HVR 5.6.5/13, if parameter /Burst is defined, the default value is 100000000 bytes (100 MB). Value 0 means unlimited, so the integrate job will process all available work in a single integrate cycle. If more than this amount of data is queued for an integrate job, then it will process the work in 'sub-cycles'. The benefit of 'sub-cycles' is that the integrate job won't last for hours or days. If parameter /Burst is defined, then large integrate cycles could boost the integrate speed, but they may require more resources (memory for sorting and disk space in the burst tables tbl__b). If the supplied value is smaller than the size of the first transaction file in the router directory, then all transactions in that file will be processed. Transactions in a transaction file will never be split between cycles or sub-cycles. If this parameter is defined for any table, then it affects all tables integrated to that location. |

| /JournalRouterFiles | | Move processed transaction files to journal directory $HVR_CONFIG/jnl/hub/chn/YYYYMMDD on the hub machine. Normally an integrate job would just delete its processed transaction files. The journal files are compressed, but their contents can be viewed using command hvrrouterview. Old journal files can be purged with command hvrmaint journal_keep_days=N. If this parameter is defined for any table, then it affects all tables integrated to that location. |

| /JournalBurstTable (since v5.3.1/21) | | Keep track of changes in the burst table during /Burst integrate. If this option is enabled, HVR will create a copy of the burst table in a separate audit table with some extra metadata such as the timestamp and the burst SQL statement. This makes it easier to recover in case of a failure during /Burst integrate. |

| /Delay | N | Delay integration of changes for N seconds. |

| /Context | ctx | Ignore action unless refresh/compare context ctx is enabled. The value should be the name of a context (a lowercase identifier). It can also have form !ctx, which means that the action is effective unless context ctx is enabled. One or more contexts can be enabled for HVR Compare or Refresh (on the command line with option -Cctx). Defining an action which is only effective when a context is enabled can have different uses. For example, if action Integrate /RenameExpression /Context=qqq is defined, then the file will only be renamed if context qqq is enabled (option -Cqqq). |
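
The worked numbers given for /TxBundleSize and /TxSplitLimit can be reproduced with a short sketch. The function names are hypothetical, and the exact rule HVR uses to close a bundle is an assumption chosen so that the result matches the example in the table (bundles of 9 and 6 changes); it is not taken from HVR's implementation.

```python
def bundle_transactions(tx_sizes: list[int], bundle_size: int) -> list[list[int]]:
    """Group whole transactions into bundles without splitting any of them.

    Assumed rule: close the current bundle when adding the next transaction
    would push its number of changes above bundle_size.
    """
    bundles, current = [], []
    for size in tx_sizes:
        if current and sum(current) + size > bundle_size:
            bundles.append(current)
            current = []
        current.append(size)
    if current:
        bundles.append(current)
    return bundles


def split_transaction(n_changes: int, split_limit: int) -> list[int]:
    """Split one large transaction into pieces of at most split_limit changes.

    A split_limit of 0 means the transaction is never split.
    """
    if split_limit <= 0:
        return [n_changes]
    full, rest = divmod(n_changes, split_limit)
    return [split_limit] * full + ([rest] if rest else [])


# Five transactions of 3 changes each with a bundle size of 10.
print([sum(b) for b in bundle_transactions([3] * 5, 10)])
# A 10-million-row transaction with a split limit of 1000000.
print(len(split_transaction(10_000_000, 1_000_000)))
```

Bundling only ever merges whole transactions and splitting only ever cuts one transaction into pieces, which is why the two parameters tune opposite ends of the transaction-size range.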

Columns Changed During Update

If an SQL update is done to one column of a source table, but other columns are not changed, then normally the update statement performed by HVR integrate will only change the column named in the original update. However, all columns will be overwritten if the change was captured with Capture /NoBeforeUpdate.

There are three exceptional situations where columns will never be overwritten by an update statement:

- If ColumnProperties /NoUpdate is defined;

- If the column has a LOB data type and was not changed in the original update;

- If the column was not mentioned in the channel definition.
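
The rules above can be summarized as a small decision function. This is only an illustrative sketch: the function and argument names are hypothetical, and the sets passed in stand for information that HVR derives from the channel definition and the captured change.

```python
def columns_to_update(
    changed: set[str],
    channel_columns: list[str],
    no_update: frozenset[str] = frozenset(),
    lob_columns: frozenset[str] = frozenset(),
    no_before_update: bool = False,
) -> list[str]:
    """Return the columns that the integrate update statement would set."""
    if no_before_update:
        # Captured with Capture /NoBeforeUpdate: all channel columns are candidates.
        candidates = set(channel_columns)
    else:
        # Normally only the columns named in the original update are candidates.
        candidates = set(changed) & set(channel_columns)
    result = []
    for col in sorted(candidates):
        if col in no_update:
            continue  # ColumnProperties /NoUpdate is defined
        if col in lob_columns and col not in changed:
            continue  # LOB column that the original update did not change
        result.append(col)
    return result


cols = ["id", "price", "photo", "audit"]
print(columns_to_update({"price"}, cols, frozenset({"audit"}), frozenset({"photo"})))
print(columns_to_update({"price"}, cols, frozenset({"audit"}), frozenset({"photo"}), True))
```

Columns that are not in the channel definition never become candidates, which covers the third exception.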

Controlling Trigger Firing

Sometimes during integration it is preferable for application triggers not to fire. This can be achieved by changing the triggers so that they check the integrate session (for example where userenv('CLIENT_INFO') <>'hvr_integrate'). For more information, see section Application triggering during integration in Capture.

For Ingres target databases, database rule firing can be prevented by specifying Integrate /NoTriggerFiring or with hvrrefresh option -f.

SharePoint Version History

HVR can replicate to and from a SharePoint/WebDAV location which has versioning enabled. By default, HVR's file integrate will delete the SharePoint file history, but the file history can be preserved if action LocationProperties /StateDirectory is used to configure a state directory (which is located on the HVR machine, outside SharePoint). Defining a /StateDirectory outside SharePoint does not impact the 'atomicity' of file integrate, because this atomicity is already supplied by the WebDAV protocol.

Salesforce Attachment Integration

Attachments can be integrated into Salesforce.com by defining a 'blob' file channel (without table information) which captures from a file location and integrates into a Salesforce location. In this case, the API name of the Salesforce table containing the attachments can be defined either with Integrate /TableName or using 'named pattern' {hvr_sf_tbl_name} in the Capture /Pattern parameter. Likewise, the key column can be defined either with Integrate /KeyName or using 'named pattern' {hvr_sf_key_name}. The value for each key must be defined with 'named pattern' {hvr_sf_key_value}.

For example, if the photo of each employee is named id.jpg, and these need to be loaded into a table named Employee with key column EmpId, then action Capture /Pattern="{hvr_sf_key_value}.jpg" should be used with action Integrate /TableName="Employee" /KeyName="EmpId".

- All rows integrated into Salesforce are treated as 'upserts' (an update or an insert). Deletes cannot be integrated.

- Salesforce locations can only be used for replication jobs; HVR Refresh and HVR Compare are not supported.
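
To make the employee photo example concrete, the sketch below shows one way a 'named pattern' such as {hvr_sf_key_value}.jpg could be matched against a file name to recover the key value. The regular-expression translation and the function names are assumptions for illustration; HVR's own pattern matching may behave differently.

```python
import re


def named_pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a pattern with {name} placeholders into a compiled regex.

    Assumption: each placeholder matches one or more characters other than '/'.
    """
    parts = re.split(r"(\{[a-z_]+\})", pattern)
    regex = ""
    for part in parts:
        if part.startswith("{") and part.endswith("}"):
            regex += f"(?P<{part[1:-1]}>[^/]+)"
        else:
            regex += re.escape(part)
    return re.compile(regex + r"\Z")


def attachment_target(file_name: str, pattern: str, table_name: str, key_name: str):
    """Return the upsert target for a captured file, or None if it does not match."""
    match = named_pattern_to_regex(pattern).match(file_name)
    if match is None:
        return None
    return {
        "table": table_name,
        "key_name": key_name,
        "key_value": match.group("hvr_sf_key_value"),
    }


print(attachment_target("1042.jpg", "{hvr_sf_key_value}.jpg", "Employee", "EmpId"))
```

With Integrate /TableName="Employee" /KeyName="EmpId", a file named 1042.jpg would thus be attached to the Employee row whose EmpId is 1042, while a file such as 1042.png would not match the pattern.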

Timestamp Substitution Format Specifier

Timestamp substitution format specifiers allow explicit control of the format applied when substituting a timestamp value. These specifiers can be used with {hvr_cap_tstamp[spec]}, {hvr_integ_tstamp[spec]}, and {colname [spec]} if colname has a timestamp data type. The components that can be used in a timestamp format specifier spec are:

| Component | Description | Example |

|---|---|---|

| %a | Abbreviated weekday name according to current locale. | Wed |

| %b | Abbreviated month name according to current locale. | Jan |

| %d | Day of month as a decimal number (01–31). | 07 |

| %H | Hour as a decimal number using a 24-hour clock (00–23). | 17 |

| %j | Day of year as a decimal number (001–366). | 008 |

| %m | Month as a decimal number (01 to 12). | 04 |

| %M | Minute as a decimal number (00 to 59). | 58 |

| %s | Seconds since epoch (1970-01-01 00:00:00 UTC). | 1099928130 |

| %S | Second (range 00 to 61). | 40 |

| %T | Time in 24-hour notation (%H:%M:%S). | 17:58:40 |

| %U | Week of year as decimal number, with Sunday as first day of week (00 – 53). | 30 |

| %V (Linux) | The ISO 8601 week number, range 01 to 53, where week 1 is the first week that has at least 4 days in the new year. | 15 |

| %w | Weekday as decimal number (0 – 6; Sunday is 0). | 6 |

| %W | Week of year as decimal number, with Monday as first day of week (00 – 53). | 25 |

| %y | Year without century. | 14 |

| %Y | Year including the century. | 2014 |

| %[localtime] | Perform timestamp substitution using machine local time (not UTC). This component should be at the start of the specifier (e.g. {hvr_cap_tstamp %[localtime]%H}). | |

| %[utc] | Perform timestamp substitution using UTC (not local time). This component should be at the start of the specifier (e.g. {hvr_cap_tstamp %[utc]%T}). | |
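
Most of the components listed above have the same meaning as the corresponding strftime directives, so their effect can be previewed with Python's standard library. The sketch below is only an approximation of the substitution: the function name is hypothetical, and falling back to UTC when neither %[localtime] nor %[utc] is given is an assumption, not documented HVR behavior.

```python
from datetime import datetime, timezone


def format_tstamp(ts: datetime, spec: str) -> str:
    """Format a timezone-aware timestamp using a strftime-style spec.

    A leading %[localtime] or %[utc] selects the time zone used for the
    substitution. Without either prefix this sketch assumes UTC.
    """
    if spec.startswith("%[localtime]"):
        ts = ts.astimezone()  # convert to the machine's local time zone
        spec = spec[len("%[localtime]"):]
    else:
        if spec.startswith("%[utc]"):
            spec = spec[len("%[utc]"):]
        ts = ts.astimezone(timezone.utc)
    return ts.strftime(spec)


captured = datetime(2014, 1, 8, 17, 58, 40, tzinfo=timezone.utc)
print(format_tstamp(captured, "%[utc]%Y%m%d_%H%M%S"))
print(format_tstamp(captured, "%j"))
```

A specifier such as %Y%m%d_%H%M%S is a common choice for file names because the resulting strings sort chronologically.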